Inspect Evals Register (Beta) User Guide

The Inspect Evals Register aims to make it easier to submit, discover, and run evals that live in their authors’ own repositories. It lists each eval in the inspect_evals docs with a pointer to a pinned commit of the upstream repo.

This guide outlines how to register (and update) evaluations in the Inspect Evals docs. For background on why the project moved to a register model, see About the Register.


How to run externally-managed evals

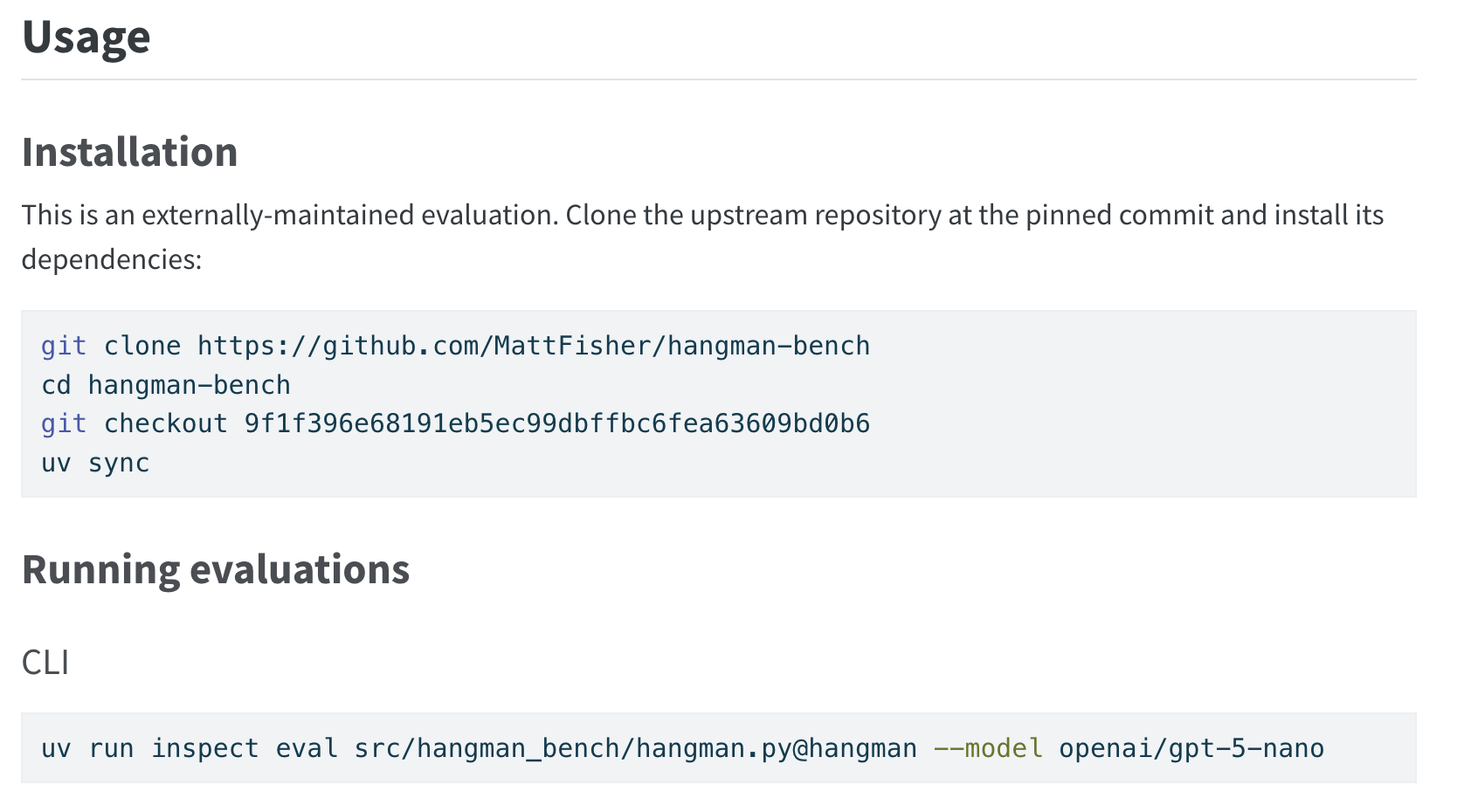

Users run registered evals by cloning the upstream repository at the pinned commit, installing its dependencies, and running inspect eval against the task file. Our doc auto-generation creates a usage section that walks through these steps for each registered eval. See below as an example:

inspect eval commands a user runs to execute a registered eval.How to add an evaluation to the register

We designed the submission process to be lightweight. However, to plug into Inspect Evals’ docs and workflows, the upstream eval repo must meet the following requirements:

Upstream repo requirements

- Have a pyproject.toml with a [project] table so the repo can be installed via uv sync (or any PEP 517-compatible installer). We use uv in the generated commands for consistency with the rest of inspect_evals, but nothing upstream depends on uv specifically.
  - Here is an example pyproject.toml from the Generality Labs eval implementation template.
  - See HangmanBench for a filled-out example.
- Declare inspect_ai as a dependency.
- Define each task with the @task decorator from inspect_ai.
- Host external assets in stable, version-controlled storage, and pin them to a specific version.

Steps to make the new-submission PR

Before registering, please read the Contributor Guide for our general guidelines on what we want from an evaluation, including scoping, validity, scoring, and task versioning. Unlike for acceptance into Inspect Evals, these guidelines are not mandatory for register acceptance. Over the coming weeks we will add information to the register about how well each entry adheres to them. Individual users can then decide how much they care about these scores when searching the register for evaluations to run.

- Create register/<eval_name>/eval.yaml. Copy example_eval.yaml as a starting point: it has every field, with inline comments explaining what’s required and what’s optional. See hangman-bench/eval.yaml for a real-world filled-in example.
  - The schema of the evaluation_report block is defined by the EvaluationReport, EvaluationReportResult, and EvaluationReportMetric pydantic models. See those classes for the authoritative list of fields and types. Per-row metrics is a flexible list[{key, value}] (at least one entry required), so eval-specific metric names (accuracy, stderr, benign_utility, targeted_asr, etc.) appear as table columns without schema changes. The block mirrors the output of the evaluation report workflow.
  - For multi-task evals, set task on each row to the task name; the renderer emits one table per task. Use a row without task to add an “Overall” aggregate. commit captures the upstream SHA the run was performed against (it may differ from source.repository_commit if the pin has since been bumped). version pins the eval’s reported version at the same level, for reproducibility.
- From the repo root, run uv run python tools/generate_readmes.py --create-missing-readmes. This validates your eval.yaml and generates README.md next to it. The README is fully auto-generated from eval.yaml and links back to the upstream repo for everything else. (If you have make installed, make check runs this along with the rest of the repo’s lint/format checks, but these checks are generally unnecessary for a register PR.) Because the generated page defers to upstream for details, make sure your upstream repo’s README covers the dataset, the scorer, any task parameters, and how the eval was validated. Readers landing on the inspect_evals page will follow the link back expecting to find that context.
- Check which assets (datasets, model weights, scoring corpora) your eval uses, and make sure they are hosted in version-controlled storage and pinned. For example, load Hugging Face assets with an explicit revision=, and include a commit SHA in GitHub raw URLs.
  - Hugging Face loads without a revision, branch-based URLs, and personal cloud-drive links can all change without notice.
- Open a PR. The reviewer will ping anyone listed under source.maintainers for acknowledgement before merging.

How to update an evaluation in the register

When the upstream eval changes and you want the register to reflect it:

- Upstream maintainers land their changes in the upstream repo.
- Bump source.repository_commit in the Inspect Evals Register and re-run uv run python tools/generate_readmes.py --create-missing-readmes. This tells users of inspect_evals that you have vouched for the eval at that particular commit. If the update changes the evaluation’s behaviour, consider regenerating the evaluation_report to record new results.
- Open a PR against the Inspect Evals Register. Both you and anyone listed under source.maintainers will be pinged before merging.

Automated register submission checks

Whenever a PR is opened (or marked ready for review) that touches register/<name>/eval.yaml, the register-submission workflow runs. PRs that bundle register edits with non-register changes (e.g. schema migrations that touch metadata.py alongside the register entries) are allowed — they go through both this workflow and the standard agentic code review.

The workflow runs in two passes. The lightweight automated checks always run; the heavyweight agentic code review only runs when the diff actually shifts what an entry points at or claims about the upstream code.

Always-run automated checks

| Check | What it confirms |
|---|---|
| Size | At most 10 eval.yaml entries are added in a single PR (beta cap). |
| Schema | eval.yaml parses against the ExternalEvalMetadata Pydantic model: required fields are present and types are valid. |
| Repository access | source.repository_url resolves to a public GitHub repo. |
| Commit pinning | source.repository_commit is a full 40-character hex SHA that exists on the remote. |
| Duplicate | The eval id (directory name) doesn’t collide with an existing internal or register eval, and declared task names don’t collide with any existing task. |
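You can reproduce the format half of the commit pinning check locally. The same test applies to the GitHub raw URLs recommended in the asset step. The sketch below is hypothetical and is not the workflow’s actual code. It checks only that a string is a 40-character hex SHA, or that a raw.githubusercontent.com URL uses one as its ref. Confirming that the commit exists on the remote needs a network call, which is not shown. The sketch assumes the usual https://raw.githubusercontent.com/<owner>/<repo>/<ref>/<path> layout, and the SHA and URLs in it are placeholders.

```python
import re
from urllib.parse import urlparse

# A full commit SHA-1 is exactly 40 hexadecimal characters.
FULL_SHA = re.compile(r"[0-9a-fA-F]{40}")


def is_full_sha(ref: str) -> bool:
    """True if ref is formatted as a full 40-char commit SHA (existence is not checked)."""
    return FULL_SHA.fullmatch(ref) is not None


def raw_url_is_pinned(url: str) -> bool:
    """True if a raw.githubusercontent.com URL uses a full commit SHA as its ref.

    Branch names such as "main" fail the check because they can move.
    """
    parsed = urlparse(url)
    if parsed.netloc != "raw.githubusercontent.com":
        return False
    # Expected parts: [owner, repo, ref, *path], with at least one path component.
    parts = parsed.path.strip("/").split("/")
    return len(parts) >= 4 and is_full_sha(parts[2])


sha = "0123456789abcdef0123456789abcdef01234567"  # placeholder SHA
print(is_full_sha(sha))        # True
print(is_full_sha("abc1234"))  # False: short SHAs are rejected
print(raw_url_is_pinned(f"https://raw.githubusercontent.com/owner/repo/{sha}/data/test.jsonl"))  # True
print(raw_url_is_pinned("https://raw.githubusercontent.com/owner/repo/main/data/test.jsonl"))    # False
```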

Heavy automatic code review (significant changes only)

The agentic code review runs when the diff is register-significant — a new entry, a source.repository_url / source.repository_commit change, a tasks[*] rename or path change, or a title / description edit. Field tweaks like abbreviation, tags, source.maintainers, or evaluation_report updates aren’t significant on their own, so the heavy pass is skipped for them.
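The trigger rule above can be restated as a small predicate, which may help you predict whether an edit will get the heavy pass. This is a hypothetical sketch that operates on eval.yaml entries already loaded as dicts, for example with a YAML parser. It makes two assumptions beyond the text above. First, each tasks entry is assumed to have name and task_path keys. Second, adding or removing a task is treated as significant, which the text does not state explicitly.

```python
from typing import Any, Optional

Entry = dict[str, Any]


def _task_keys(entry: Entry) -> set[tuple]:
    # A task is identified by its (name, task_path) pair, so a rename
    # or a moved task file both change this set.
    return {(t.get("name"), t.get("task_path")) for t in entry.get("tasks", [])}


def is_register_significant(old: Optional[Entry], new: Entry) -> bool:
    """Return True if the change from old to new should trigger the heavy review."""
    if old is None:  # a brand-new entry
        return True
    if any(old.get(k) != new.get(k) for k in ("title", "description")):
        return True
    old_src, new_src = old.get("source", {}), new.get("source", {})
    if any(old_src.get(k) != new_src.get(k) for k in ("repository_url", "repository_commit")):
        return True
    # abbreviation, tags, source.maintainers and evaluation_report are ignored,
    # so changing only those skips the heavy pass.
    return _task_keys(old) != _task_keys(new)


base = {
    "title": "Example eval",
    "description": "Illustrative entry",
    "tags": ["reasoning"],
    "source": {
        "repository_url": "https://github.com/owner/repo",
        "repository_commit": "a" * 40,
        "maintainers": ["alice"],
    },
    "tasks": [{"name": "example_task", "task_path": "src/example/task.py"}],
}
retag = {**base, "tags": ["games"]}
bump = {**base, "source": {**base["source"], "repository_commit": "b" * 40}}
print(is_register_significant(base, retag))  # False
print(is_register_significant(base, bump))   # True
```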

| Check | What it confirms |
|---|---|
| Task function | A @task-decorated function with the declared name exists at each task’s task_path in the upstream repo. |
| Runnability | Each task file imports from inspect_ai and constructs a Task with a dataset and scorer. |
| Description accuracy | The title and description in eval.yaml reflect what the code actually does. |
| Security | No cryptocurrency mining, credential exfiltration, arbitrary shell execution, or sandbox="local" paired with model-produced code. |
| Fuzzy duplicate | Surfaces existing evals with similar names or titles. Non-blocking: if the similarity is intentional, acknowledge it in a PR comment. |
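The agentic reviewer performs the task function check, but you can run a rough static version yourself before opening a PR. The sketch below is hypothetical and deliberately simple. It parses a task file with Python’s standard ast module, without importing the file, and returns the Python names of top-level functions decorated with @task or @task(...). It does not verify that task is actually inspect_ai’s decorator, and it does not check that the Task it builds has a dataset and scorer.

```python
import ast


def find_task_functions(source: str) -> set[str]:
    """Return names of top-level functions decorated with @task or @task(...)."""
    names = set()
    for node in ast.parse(source).body:
        if not isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            continue
        for dec in node.decorator_list:
            # For @task(...), the decorator node is a Call; look at what is being called.
            target = dec.func if isinstance(dec, ast.Call) else dec
            # Match both the bare form (@task) and attribute forms (@inspect_ai.task).
            if (isinstance(target, ast.Name) and target.id == "task") or (
                isinstance(target, ast.Attribute) and target.attr == "task"
            ):
                names.add(node.name)
    return names


TASK_FILE = '''
from inspect_ai import Task, task


@task
def example_task():
    return Task(dataset=..., scorer=...)


def helper():
    return None
'''

print(find_task_functions(TASK_FILE))  # {'example_task'}
```

Compare the returned names with the task names declared in eval.yaml for each task_path.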

A failed check posts a comment on the PR. There are two flavours, depending on which check failed:

- Automated checks (size, schema, repository access, commit pinning, duplicate) — the comment names what failed. Push a fix and the workflow re-runs automatically. There’s no override.

- Agentic code review checks (task function, runnability, description accuracy, security): the comment carries [REGISTER_BLOCK], or [REGISTER_SECURITY_BLOCK] for security findings. Push a fix and re-run, or a maintainer can post [REGISTER_BLOCK_OVERRIDE] / [REGISTER_SECURITY_OVERRIDE] to waive the block.

If you believe a block was raised in error, comment /appeal to notify maintainers via Slack.

If you need to kick off the workflow manually — e.g. on a draft PR or to retry after an external failure — the PR author or a maintainer can comment /register-submit.

Other contributions

For other types of changes, please see the contribution guide. In particular, if you want to suggest a change to the submission process, the automated checks, or the functionality of the Inspect Evals docs itself, please open an issue.