About the Register

Inspect Evals provides a way to share Inspect eval implementations with the broader community. However, as the number of evaluations grew, we found that maintaining quality and compatibility in a single package was not sustainable. As of the 8th of May 2026, Inspect Evals will stop accepting eval code submissions into src/. To register a new eval, contributors instead submit a simple .yaml file that points to the externally managed repo hosting the eval. This move towards a distributed register model mirrors the approach taken by other projects that faced similar problems, such as helm/charts, Terraform Registry, and Docker Official Images.

[!TIP] This doc is primarily a background explainer for contributors who want to understand why we moved to a register model. Looking for a guide to registering an evaluation whose code lives in a separate upstream repository? See the Submission Guide.

Background

Where Inspect Evals Started

Inspect Evals was launched in May 2024 as a collection of example evaluations for the Inspect AI framework. It was intended to foster community collaboration and make it easy to run, experiment with, and share Inspect AI evaluations. Until now, we’ve done this by accepting PRs that add evals directly to src/, so that they can be installed and run locally from the Inspect registry. What started as a project to show a handful of example evaluations now hosts 120+ evaluation implementations and 200+ Inspect AI tasks. With that growth, we had to start making trade-offs between opposing requirements.

What we have learned

There are at least two pairs of opposing requirements which mean the existing model (“Inspect Evals 1.0”) is not a practical long-term solution:

Stability/reproducibility vs ease-of-use: An essential requirement of evals is that they are stable over time and have reproducible results. Naively pinning versions creates dependency conflicts, and other solutions such as containerisation/virtual environments don’t yet provide the same low-friction developer or user experience.

Quality vs accessibility: Users want evals that are reliable and accurate; there is no easy way to provide guarantees other than thorough quality assurance checks. Imposing this QA process as a prerequisite for every eval submission creates unreasonable amounts of friction, and isn’t a scalable way to help users identify evals that meet their needs.
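To make the first trade-off concrete, here is a minimal sketch of how exact pins collide once many evals share a single package. The eval names, packages, and versions are invented for illustration and are not taken from Inspect Evals.

```python
from collections import defaultdict


def find_pin_conflicts(
    pins_by_eval: dict[str, dict[str, str]],
) -> dict[str, dict[str, list[str]]]:
    """Return packages that different evals pin to different exact versions.

    The result maps package -> version -> sorted list of evals wanting it.
    """
    wanted: dict[str, dict[str, list[str]]] = defaultdict(lambda: defaultdict(list))
    for eval_name, pins in pins_by_eval.items():
        for package, version in pins.items():
            wanted[package][version].append(eval_name)
    # A package is only a problem when more than one version is requested.
    return {
        package: {version: sorted(evals) for version, evals in versions.items()}
        for package, versions in wanted.items()
        if len(versions) > 1
    }


# Hypothetical pins: two evals in one package need different versions
# of the same library, so no single environment satisfies both.
example_pins = {
    "eval_a": {"datasets": "2.14.0", "numpy": "1.26.4"},
    "eval_b": {"datasets": "3.0.1", "numpy": "1.26.4"},
}
conflicts = find_pin_conflicts(example_pins)
print(conflicts)
```

When each eval is installed on its own, every eval gets its own set of pins and this kind of clash cannot occur between evals.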

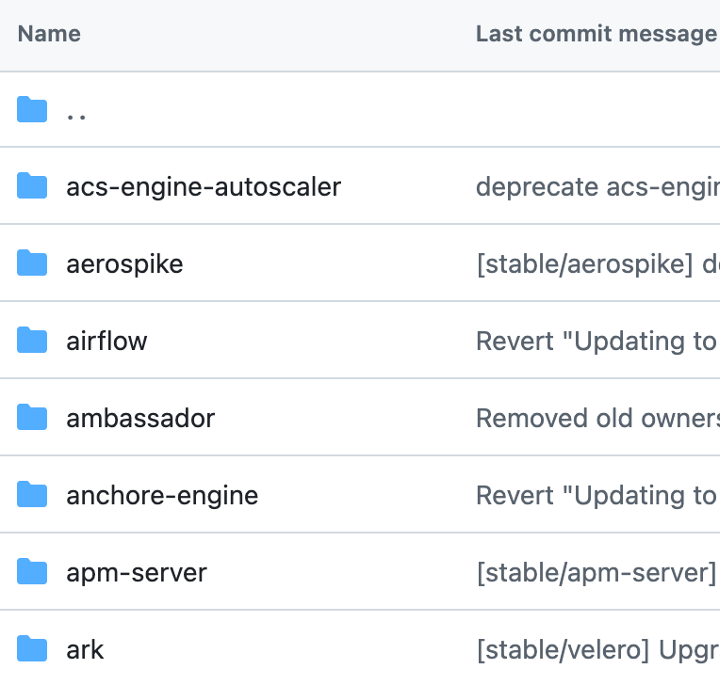

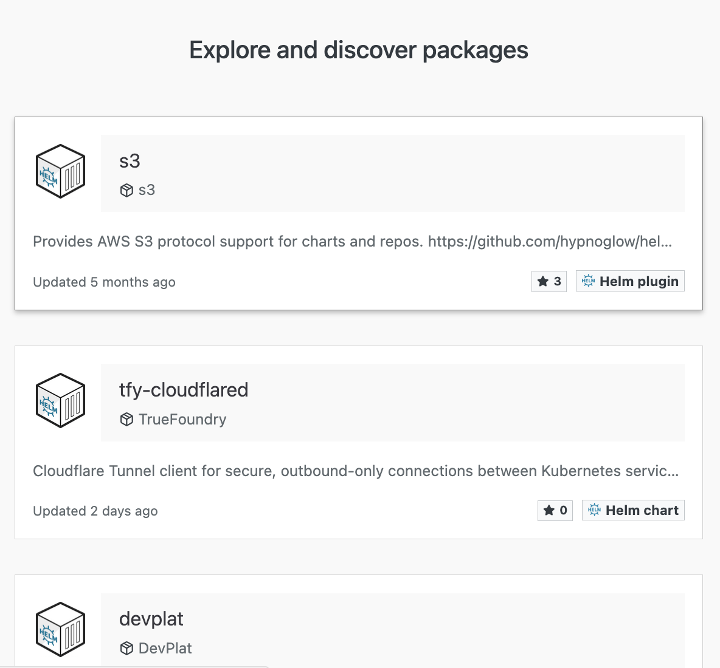

The issues we encountered aren’t unique to Inspect Evals. An informative example is Helm’s helm/charts, which also started as a monorepo and eventually grew too large to be sustainable. The project then moved to a distributed model where charts live in upstream repos and a central registry (Artifact Hub) handles discovery.

| Monorepo: helm/charts | Distributed Register: Artifact Hub |
|---|---|
| | |

Introducing: The Inspect Evals Register

As of the 8th of May 2026, new evaluations must be hosted in an upstream repo and registered with Inspect Evals via a simple .yaml file. Registered evals will be discoverable via the Inspect Evals documentation, and we have designed the process so that users get the instructions they need to run each evaluation.

We believe that moving towards this design will:

- Simplify dependency management. Each eval is installed independently, so adding or updating one eval can’t break another, which makes it easier to keep eval versions stable.

- Lower the barrier to sharing evals, as submissions no longer have to go through our lengthy QA process. The results of most QA tests don’t block submissions; instead, they give users information on each eval’s quality and reliability so that they can make informed choices.

- Keep evals discoverable and accessible through the Inspect Evals documentation.

The process is as follows:

Future Plans

Depending on community feedback, things we may investigate include:

- Currently, the Eval Quality Assurance (QA) checks on external eval submissions are fairly minimal, to keep the process easy. In the future, we are considering adding more QA checks that:

  - Implement baseline standards which determine whether evals get added to the register.

  - Report ‘eval quality’, building on the existing autolint checks and agent-runnable checks we already implement. These don’t block a submission to the register, but quickly communicate to a user which standards and best practices a given eval meets. We may present this information as an aggregated score or a checklist visual.

- Updates to the Inspect Evals Docs, driven by user needs and wants.

- Improvements to the Eval Implementation Template maintained by Generality Labs to continue supporting eval implementors.

If you have any suggestions or ideas on things you would find useful, please let us know by raising an issue!

FAQ

To add as we get more questions!

I use Inspect Evals to run benchmarks - what changes for me?

Existing evals hosted in Inspect Evals will continue to work as before. We are expanding Inspect Evals so that you can find both internally and externally maintained evals in the Inspect Evals Documentation. Each eval’s documentation page includes the commands you need to run it. To run an externally maintained eval, you install it directly from its upstream repo rather than installing the inspect_evals package.
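As a rough illustration of that last point, the sketch below builds the kind of commands involved in installing an eval from its upstream repo at a pinned commit and then running it. The repository URL, commit, task name, and model are placeholders, and the authoritative commands for any given eval are the ones on its documentation page. The sketch only assumes pip's `git+<url>@<ref>` install syntax and the `inspect eval` CLI.

```python
import shlex


def install_command(repo_url: str, commit: str) -> str:
    """Build a pip command that installs a package from a git repo at one commit."""
    return shlex.join(["pip", "install", f"git+{repo_url}@{commit}"])


def run_command(task: str, model: str) -> str:
    """Build an `inspect eval` command for a task and a model."""
    return shlex.join(["inspect", "eval", task, "--model", model])


# Placeholder values for illustration only; this is not a real eval.
repo = "https://github.com/example-org/example-eval"
sha = "0123456789abcdef0123456789abcdef01234567"

print(install_command(repo, sha))
print(run_command("example_eval/example_task", "openai/gpt-4o"))
```

Installing at a specific commit rather than a branch means the code you run does not change when the upstream repo does.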

I already have an eval implemented in my own repo - how do I get it listed?

To make your eval discoverable in the Inspect Evals Documentation, open a PR that adds a register/<eval_name>/eval.yaml file pointing to your repo at a pinned commit. The register/README.md details the 4 simple requirements for your eval repo: 1. having Inspect AI as a dependency, 2. using the @task decorator, 3. including a pyproject.toml, and 4. pinning external assets. We currently support up to 10 tasks being submitted in one PR.
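Two of the constraints mentioned above (a pinned commit and at most 10 tasks per PR) lend themselves to a quick check before opening a PR. The sketch below is hypothetical: the field names `repo`, `commit`, and `tasks` are assumptions made for illustration, not the actual eval.yaml schema, and treating "pinned" as "a full 40-character SHA" is also an assumption. The real requirements are the ones in register/README.md.

```python
import re

MAX_TASKS_PER_PR = 10  # limit stated in this FAQ
# Assumption: "pinned commit" means a full 40-character hex SHA,
# not a branch name or tag.
FULL_SHA = re.compile(r"[0-9a-f]{40}")


def check_entry(entry: dict) -> list[str]:
    """Return a list of problems found in a register entry (empty if none)."""
    problems = []
    if not str(entry.get("repo", "")).startswith("https://"):
        problems.append("repo should be an https URL")
    if not FULL_SHA.fullmatch(str(entry.get("commit", ""))):
        problems.append("commit should be a full 40-character SHA")
    tasks = entry.get("tasks") or []
    if not tasks:
        problems.append("at least one task is required")
    elif len(tasks) > MAX_TASKS_PER_PR:
        problems.append(f"at most {MAX_TASKS_PER_PR} tasks per PR")
    return problems


# Hypothetical entries, written as dicts rather than parsed from YAML.
good = {
    "repo": "https://github.com/example-org/example-eval",
    "commit": "0123456789abcdef0123456789abcdef01234567",
    "tasks": ["example_task"],
}
bad = {
    "repo": "https://github.com/example-org/example-eval",
    "commit": "main",  # a branch name is not a pinned commit
    "tasks": [f"task_{i}" for i in range(11)],
}

print(check_entry(good))
print(check_entry(bad))
```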

I am planning to develop (or am in the middle of developing) an eval to contribute to Inspect Evals - what should I do?

We ask that you build your eval in your own repo, then follow the steps above. Generality Labs has developed an Eval Implementation Template to help you get started. You don’t have to use it, but it contains useful resources such as AI skills to automate parts of the work, a checklist, and automated checks to help you implement high-quality evals.