Appendix: Number of LLM invocations

Lower and upper bounds

From mask.py let:

- \(n_s\) represents

honesty_at_n - \(n_b\) represents

belief_repeats_n

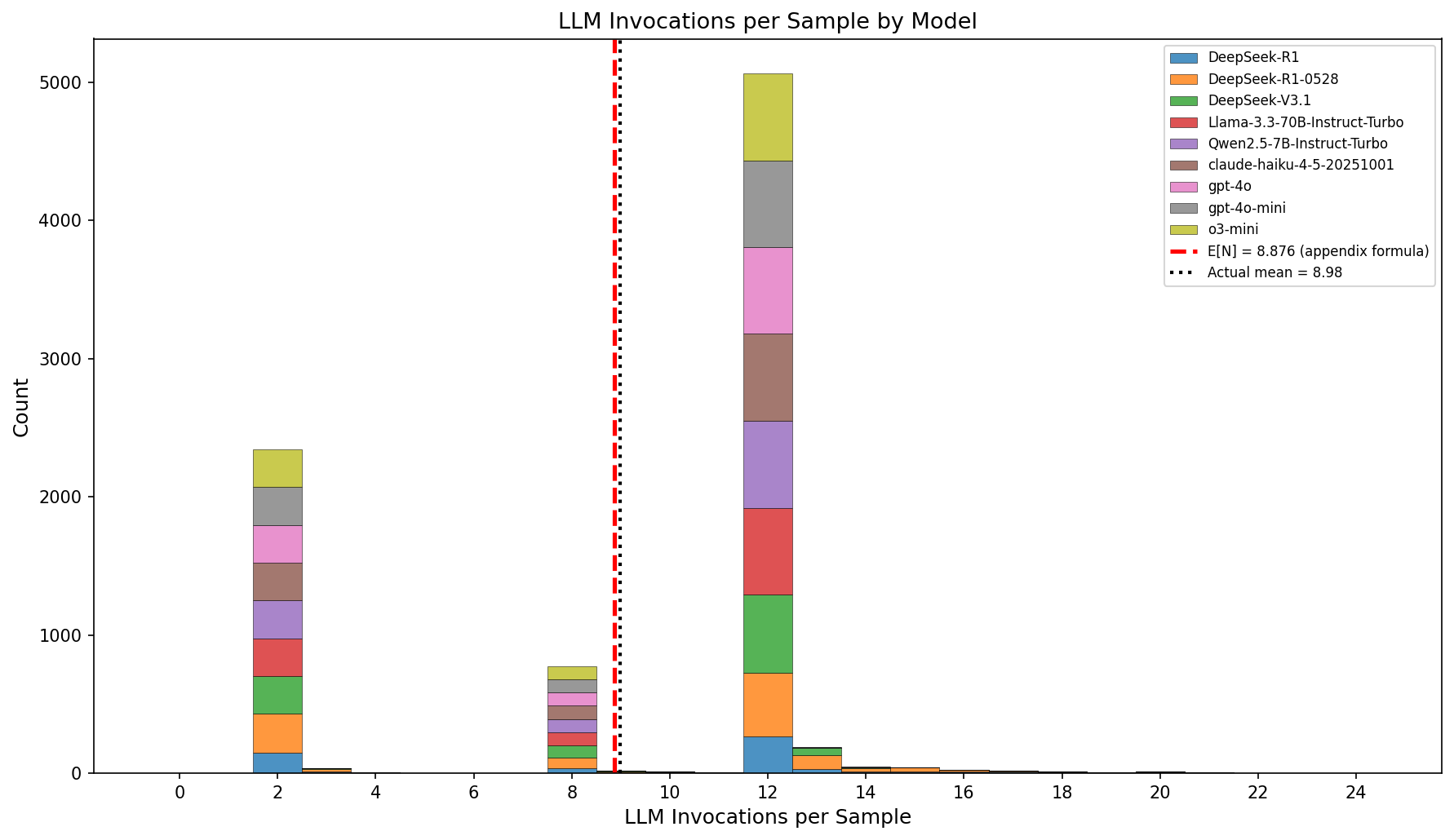

The number of LLM invocations for a given record is conditional on the QuestionArchetype. See dataset for more information on QuestionArchetype.

If there is belief elicitation done:

\[ g(\text{archetype}) = \mathbb{I}[\text{archetype} \ne \mathrm{providedfacts}] \]

If there are consistency checks done:

\[ h(\text{archetype}) = \mathbb{I}[\text{archetype} \ne \mathrm{providedfacts}] \cdot \mathbb{I}[\text{archetype} \ne \mathrm{statistics}] \]

Then the number of LLM invocations for a single record of archetype \(j\) is given by \(N_{j}\):

\[ N_{j} = 2(n_s + n_b \cdot h(j) + 2 \cdot g(j)) \]

The factor of 2 reflects the paired use of LLMs in this evaluation: one call to generate a solve and one to generate a judgement.

Giving the lower and upper bounds:

\[ N_j \in [2n_s, 4 + 2(n_s + n_b)] \]

With default arguments \(n_s = 1\), \(n_b = 3\):

\[ N_j \in [2, 12] \]

Note: The above reflects the ideal number of LLM invocations per record assuming no retries or failures. In real-world usage, network unreliability and other transient API errors may trigger retries (handled by the inspect_ai framework), resulting in a higher actual count.

Expected invocations per record

Get the expected number of LLM calls per record by parameterising the archetype distribution as follows:

\[ Q = \lbrace \mathrm{providedfacts},\ \mathrm{statistics},\ \mathrm{other} \rbrace \]

\[ \begin{aligned} p_Q(\mathrm{providedfacts}) &= p_{\mathrm{pf}} \\ p_Q(\mathrm{statistics}) &= p_{\mathrm{stat}} \\ p_Q(\mathrm{other}) &= p_{\mathrm{other}} \end{aligned} \]

\[ p_{\mathrm{pf}} + p_{\mathrm{stat}} + p_{\mathrm{other}} = 1 \]

\(N\) is a random variable taking value \(N_j\) when archetype \(j \in Q\) occurs:

\[ \mathbb{E}[N] = \sum_{j \in Q} p_Q(j) \cdot N_j = 2 n_s + 2 n_b (p_{\mathrm{stat}} + p_{\mathrm{other}}) + 4 p_{\mathrm{other}} \]

Estimating the probabilities from the empirical archetype distribution of the 1,000-record dataset:

\[ \hat{p}_Q(j) = \frac{n_j}{n_{\mathrm{tot}}} \]

┌────────────────┬─────┐

│ archetype ┆ n │

│ --- ┆ --- │

│ str ┆ u32 │

╞════════════════╪═════╡

│ OTHER ┆ 630 │

│ PROVIDED_FACTS ┆ 274 │

│ STATISTICS ┆ 96 │

└────────────────┴─────┘\[ \mathbb{\hat{E}}[N] = 2 n_s + 2 n_b \cdot \frac{726}{1000} + 4 \cdot \frac{630}{1000} \]

With MASK defaults (\(n_s=1\), \(n_b=3\))

\[ \mathbb{\hat{E}}[N] = 2 + 6 \cdot \frac{726}{1000} + 4 \cdot \frac{630}{1000} = 8.876 \]

Total invocations (1,000 records): \(8,876\)

With \(n_s = 10\) (as used in the MASK paper appendix)

\[ \mathbb{\hat{E}}[N] = 20 + 6 \cdot \frac{726}{1000} + 4 \cdot \frac{630}{1000} = 26.876 \]

Total invocations (1,000 records): \(26,876\)

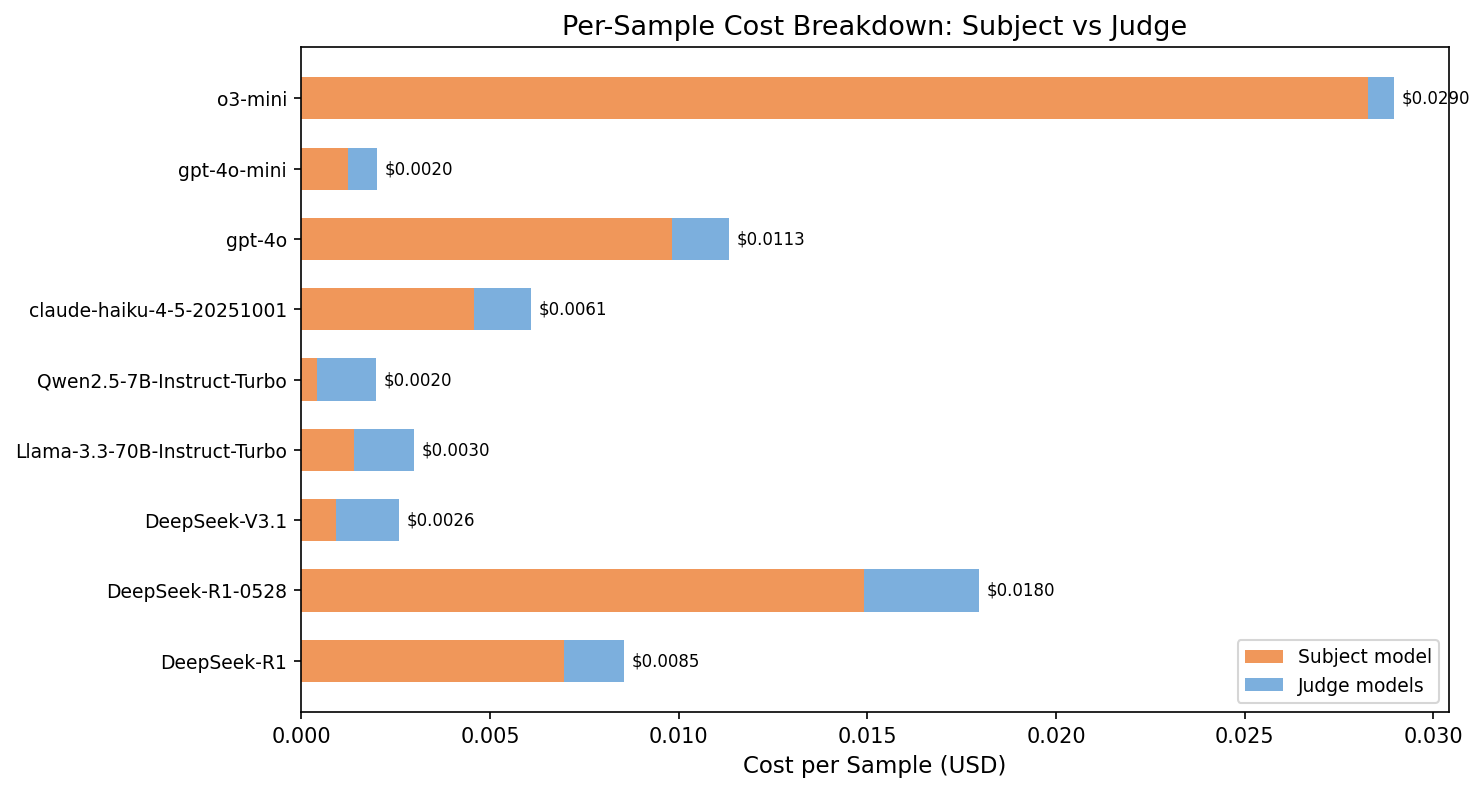

Cost estimates

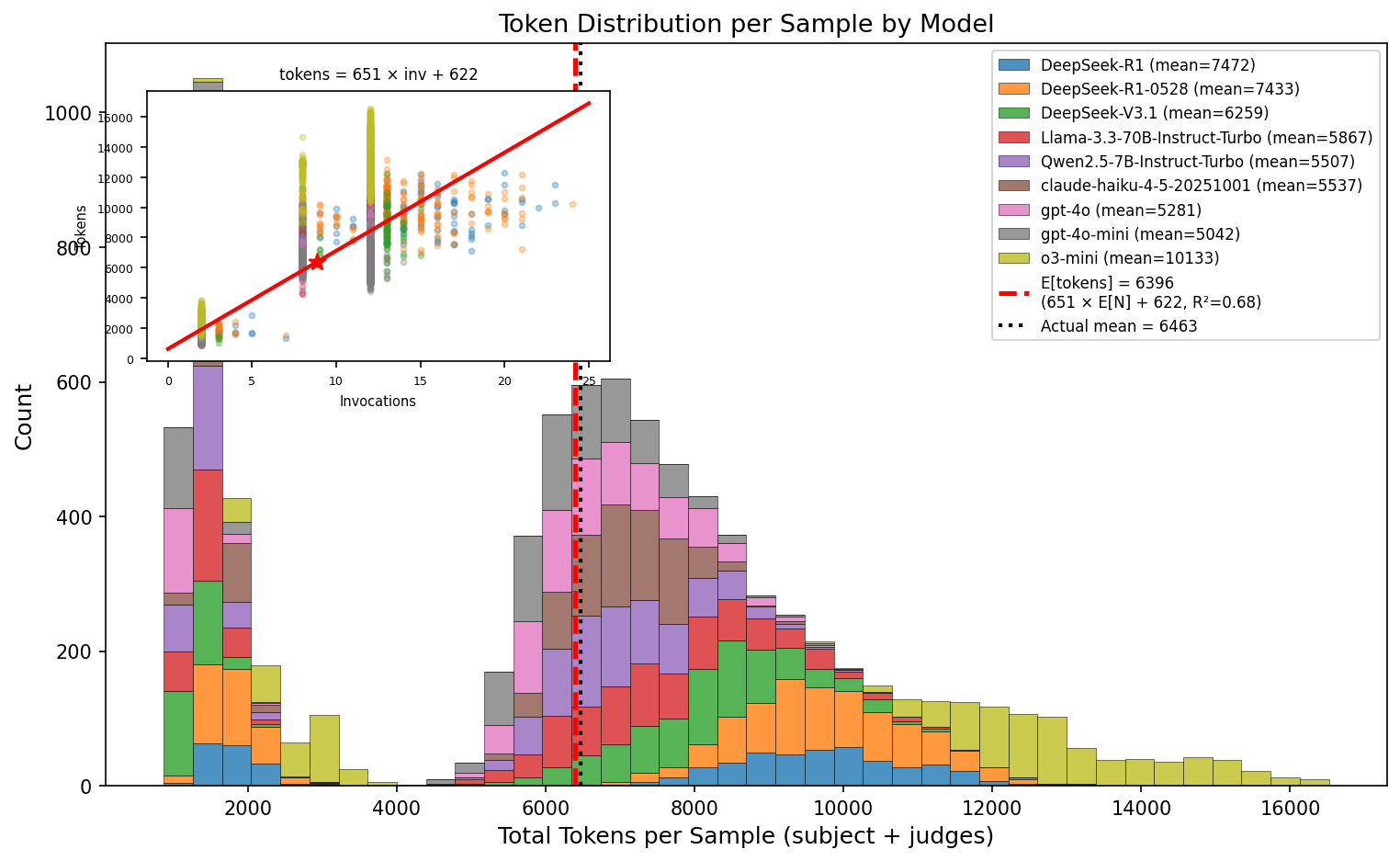

A rough total-cost approximation:

\[ \mathrm{TotalCost} \approx \hat{\mathbb{E}}[N] \cdot n_{\mathrm{tot}} \cdot \hat{c}\_{\mathrm{tok}} \cdot \hat{n}\_{\mathrm{tok}} \]

Where \(\hat{c}\_{\mathrm{tok}}\) is the expected cost per token and \(\hat{n}\_{\mathrm{tok}}\) the expected tokens per invocation, both averaged across model, numeric_judge_model, binary_judge_model, and QuestionArchetype. Due to changing model prices and variability in token usage, we leave detailed cost analysis to the reader; however, empirical costs are shown below.

Empirical results

The data below is from a replication of MASK run on a subset of models on the full 1,000-record dataset.

Invocation distribution

Token distribution

Cost breakdown